Whether you’re shopping for a new TV or you just bought one, you have probably seen the term “HDR” at some point. But what is HDR, and what does it do? Here’s everything you need to know about HDR video, including how it works, the different types of HDR, and which streaming devices and services support it.

What Is HDR?

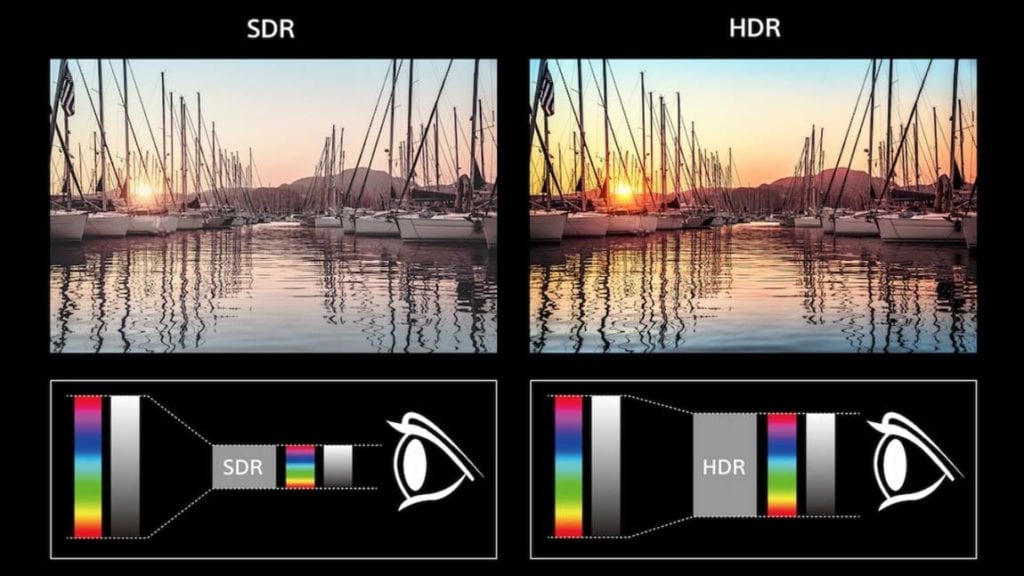

HDR stands for High Dynamic Range. It is a technology that preserves more detail in the shadows and highlights of an image. That means you’ll get brighter whites, deeper blacks, and a wider range of colors compared to displays with Standard Dynamic Range (SDR).

In the example above, HDR lets you see the sun’s light beams that would otherwise be “blown out” by the surrounding light with SDR. Also, HDR lets you see more detail in the rocks in the bottom-right that get lost in the shadows with SDR. Finally, the trees on the left become more vivid with HDR, allowing their green color to pop.

What Does HDR Do?

HDR increases the range between the brightest whites and the darkest blacks on a display. With HDR video, content creators have more control over how bright and colorful certain parts of an image can be, so they can replicate what the eye sees in real life.

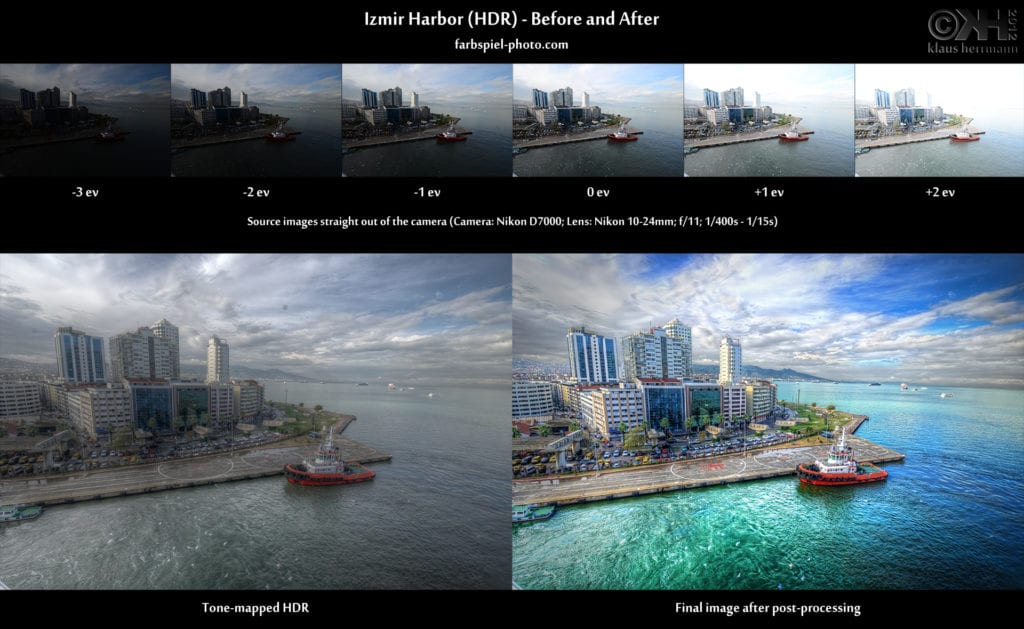

Traditional video cameras only use one exposure at a time to capture an image. That means the brightest parts of an image can be overexposed and lose detail in the highlights, while the darkest parts can be underexposed and lose detail in the shadows.

With HDR, video cameras can capture multiple exposures at the same time, which can be combined in post-production to increase the contrast ratio and color range. This produces a more lifelike image because our eyes can see a greater range of light and color than a single camera exposure can record.
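As a rough illustration of how several exposures might be combined, the sketch below blends one pixel across exposures and gives more weight to readings that are neither crushed to black nor clipped to white. This is a simplified weighting scheme chosen for illustration, not the method any particular camera or editing tool uses, and the function names and sample values are hypothetical.

```python
import math


def well_exposed_weight(value: float, sigma: float = 0.2) -> float:
    """Weight close to 1 for mid-tones (0.5) and close to 0 near pure black or white."""
    return math.exp(-((value - 0.5) ** 2) / (2 * sigma ** 2))


def fuse_pixel(exposures: list[float]) -> float:
    """Blend one pixel's brightness (0.0 to 1.0) across several exposures.

    Each exposure contributes in proportion to how well exposed it is,
    so clipped highlights and crushed shadows count for less.
    """
    weights = [well_exposed_weight(v) for v in exposures]
    total = sum(weights)  # always > 0 because the Gaussian weight never reaches zero
    return sum(w * v for w, v in zip(weights, exposures)) / total


# Hypothetical readings of the same shadow pixel from a short, medium, and long exposure.
shadow_pixel = [0.02, 0.10, 0.45]
print(round(fuse_pixel(shadow_pixel), 3))
```

In this example the long exposure, which actually recorded detail in the shadow, dominates the result, so the blended pixel ends up brighter than a plain average of the three readings.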

HDR requires a TV to have a high peak brightness. In fact, HDR TVs can get 10 or more times brighter than SDR TVs. However, that doesn’t mean the whole image will be brighter. Instead, content creators can control how bright or dark each part of the image will be.
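To put the “10 times brighter” figure in perspective, dynamic range is often expressed in stops, where each stop is a doubling of light. The sketch below is only an illustration: the 100-nit and 1,000-nit peaks and the 0.05-nit black level are assumed round numbers, not measurements of any particular TV, and `dynamic_range_stops` is a hypothetical helper.

```python
import math


def dynamic_range_stops(peak_nits: float, black_nits: float) -> float:
    """Dynamic range in stops: log2 of the ratio between brightest and darkest levels."""
    if peak_nits <= 0 or black_nits <= 0:
        raise ValueError("brightness levels must be positive")
    return math.log2(peak_nits / black_nits)


# Assumed, illustrative values (not measurements of real displays).
BLACK_LEVEL = 0.05   # nits
SDR_PEAK = 100.0     # nits
HDR_PEAK = 1000.0    # nits, i.e. 10 times the SDR peak

sdr_stops = dynamic_range_stops(SDR_PEAK, BLACK_LEVEL)
hdr_stops = dynamic_range_stops(HDR_PEAK, BLACK_LEVEL)

print(f"SDR: {sdr_stops:.1f} stops")
print(f"HDR: {hdr_stops:.1f} stops")
print(f"Extra range: {hdr_stops - sdr_stops:.1f} stops")
```

With the same black level, a tenfold increase in peak brightness adds log2(10), or roughly 3.3, stops of range.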

With most HDR displays, you will also get wide color gamut (WCG). This technology lets a TV display more vivid colors with higher saturation, as well as more shades of color in between. The majority of SDR displays use 8-bit color, which can produce up to 256 shades per color channel (red, green, and blue), or around 16.7 million different colors. On the other hand, HDR uses at least 10-bit color, which can produce 1,024 shades per channel, or over 1 billion colors.
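The color counts above follow directly from the bit depth. Here is a minimal sketch of the arithmetic, assuming three color channels (red, green, and blue) with the same number of bits each; the function names are just for illustration.

```python
def shades_per_channel(bits: int) -> int:
    """Number of distinct levels one color channel can take at a given bit depth."""
    return 2 ** bits


def total_colors(bits: int, channels: int = 3) -> int:
    """Total colors when each channel (red, green, blue) uses `bits` bits."""
    return shades_per_channel(bits) ** channels


for bits in (8, 10):
    print(f"{bits}-bit: {shades_per_channel(bits):,} shades per channel, "
          f"{total_colors(bits):,} colors")
# 8-bit: 256 shades per channel, 16,777,216 colors
# 10-bit: 1,024 shades per channel, 1,073,741,824 colors
```

Adding just two bits per channel multiplies the number of shades per channel by 4 and the total number of colors by 64.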

While HDR can improve the quality of images on your TV, not all TVs are created equal. There are many factors that can change the image quality of your display, including how bright it can get, the resolution, and the type of HDR standard your display supports.

The Different Types of HDR Standards

There are three main types of HDR technology you can find in most displays today: HDR10, HDR10+, and Dolby Vision. While HDR10 is the most common standard found in most 4K displays these days, Dolby Vision can get brighter, support more colors, and produce the most consistent image.

If you want to know more about what 4K is, check out our article on the difference between 4K and 1080p here.

What Is HDR10?

HDR10 is the most common HDR standard, so if your TV says it supports HDR, chances are that it at least supports HDR10. Since HDR10 is an “open standard,” and manufacturers don’t have to pay a licensing fee to use the format, the quality of images can vary widely.

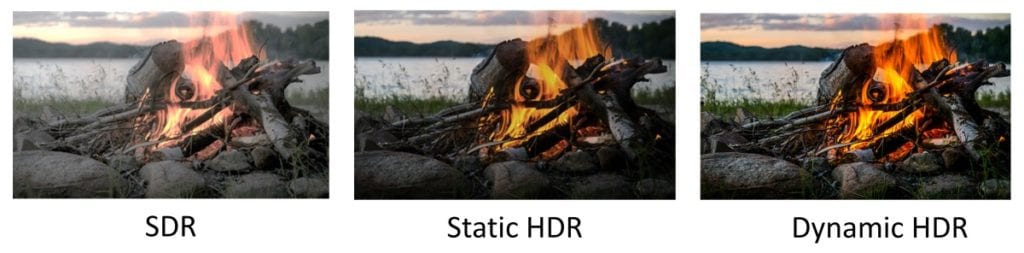

Also, HDR10 uses “static metadata,” which means that it has one set of information to define the brightest and darkest light levels of an entire video. This can be a problem if one scene in a movie is very bright or very dark, because the TV may make the rest of the movie lighter or darker in order to compensate.

What Is HDR10+?

HDR10+ takes all the good parts of HDR10 and improves on them. It has higher levels of brightness and contrast than HDR10, and it supports dynamic metadata. This means the brightness information can change from shot to shot, which leads to more accurate light levels than HDR10.
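The difference between static and dynamic metadata can be sketched with a toy example. The code below assumes a simple linear scale factor to fit content into a display’s brightness range; real TVs use more sophisticated tone-mapping curves, and the scene values, display peak, and function names here are all hypothetical.

```python
def scale_factor(content_peak_nits: float, display_peak_nits: float) -> float:
    """Linear factor that fits the content peak into the display's range (never brightens)."""
    return min(1.0, display_peak_nits / content_peak_nits)


def static_factors(scene_peaks, display_peak_nits):
    """Static metadata: one peak value for the whole video, so every scene shares one factor."""
    overall_peak = max(scene_peaks)
    factor = scale_factor(overall_peak, display_peak_nits)
    return [factor for _ in scene_peaks]


def dynamic_factors(scene_peaks, display_peak_nits):
    """Dynamic metadata: each scene carries its own peak, so each gets its own factor."""
    return [scale_factor(peak, display_peak_nits) for peak in scene_peaks]


# Hypothetical peak brightness (in nits) of three scenes:
# a dim interior, a daylight exterior, and a bright explosion.
scene_peaks = [200.0, 800.0, 4000.0]
display_peak = 1000.0  # hypothetical TV peak brightness in nits

print(static_factors(scene_peaks, display_peak))   # [0.25, 0.25, 0.25]
print(dynamic_factors(scene_peaks, display_peak))  # [1.0, 1.0, 0.25]
```

With static metadata, the single very bright scene forces every scene to be dimmed to a quarter of its intended brightness. With dynamic metadata, only the scene that actually exceeds the display’s peak is scaled down.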

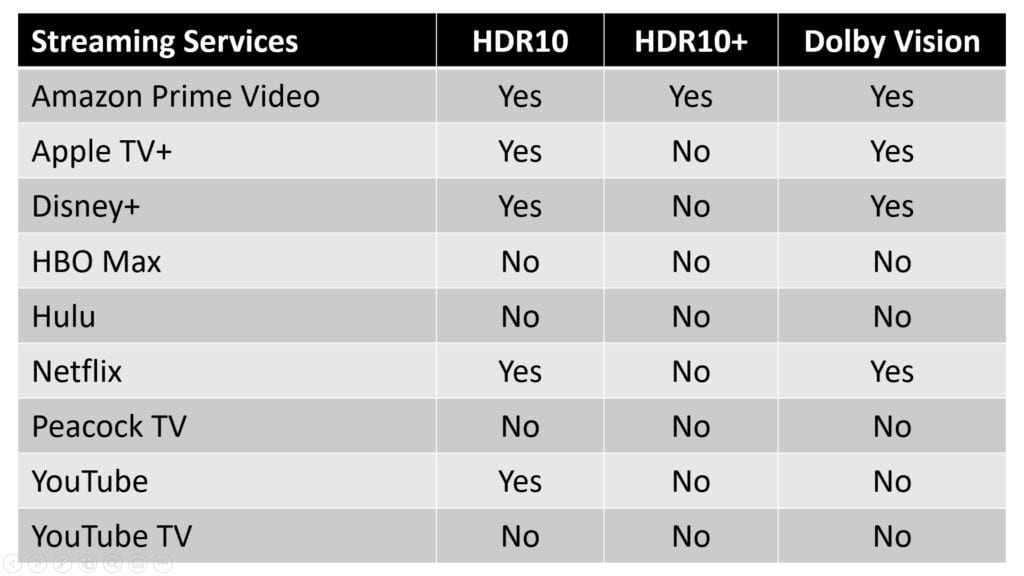

HDR10+ is also an open, royalty-free format, but it is not nearly as popular as HDR10. There are only a few TVs and very little content that support the format. So far, Amazon Prime Video is the only streaming service that supports HDR10+.

What Is Dolby Vision?

Dolby Vision is an HDR standard created and licensed by Dolby, which means it costs display manufacturers money to implement it in their devices. Because of this, Dolby Vision follows more rigid standards than HDR10, so it will produce very consistent images.

Like HDR10+, Dolby Vision uses dynamic metadata, but it can also get much brighter, and it can display more colors than HDR10 and HDR10+. However, most TVs and videos won’t be able to take full advantage of everything the standard offers. To compensate, Dolby Vision takes the capabilities of your display into account and scales content down to match them. That means you should see the benefits of Dolby Vision, no matter how bright your TV can get.

So, if you’re looking for the best image possible, you might want to opt for Dolby Vision or HDR10+. On the other hand, the majority of HDR content is currently only available in HDR10. However, if you get a TV with Dolby Vision or HDR10+, it will also support HDR10.
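One way to picture how these formats fall back to one another is a simple lookup: pick the best format that both the TV and the content support, and drop to SDR if there is none. This is only a sketch; the preference order is an assumption loosely based on the ranking described above, and real devices negotiate formats in more involved ways.

```python
# Assumed preference order for illustration, from most to least preferred.
PREFERENCE = ["Dolby Vision", "HDR10+", "HDR10"]


def pick_format(tv_formats, content_formats):
    """Return the most preferred format both sides support, or "SDR" if none match."""
    for fmt in PREFERENCE:
        if fmt in tv_formats and fmt in content_formats:
            return fmt
    return "SDR"


# Hypothetical TV that supports HDR10+ (and therefore HDR10 as well).
tv = {"HDR10+", "HDR10"}

print(pick_format(tv, {"Dolby Vision", "HDR10"}))  # HDR10
print(pick_format(tv, {"HDR10+", "HDR10"}))        # HDR10+
print(pick_format(tv, set()))                      # SDR
```

Because HDR10 sits at the bottom of the list and is the most widely supported format, it acts as the common fallback whenever the TV and the content disagree on the more advanced formats.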

What Do You Need to Get HDR?

To experience HDR, you will need a TV, monitor, smartphone, or any other type of display that supports the format. You will also need to play HDR-compatible content from a source that supports the format, such as an Ultra HD Blu-ray player or a compatible streaming service.

What Devices Support HDR?

There are lots of devices that support HDR, including smart TVs, Ultra HD Blu-ray players, and almost all 4K streaming devices, such as the Roku Streaming Stick 4K, Roku Ultra, Fire TV 4K, Apple TV 4K, Chromecast Ultra, Chromecast with Google TV, and more.

You will usually be able to see if your TV or streaming device supports HDR on the box. To get the most out of your HDR TV, you should also connect external devices with a high-bandwidth HDMI cable, such as an HDMI 2.1 (Ultra High Speed) cable.

Which Streaming Services Support HDR?

Most of the major streaming services support some form of HDR these days, including Netflix, Amazon Prime Video, Apple TV+, Disney+, YouTube, and more. There are also a few streaming services that don’t support HDR, including Hulu, HBO Max, Peacock TV, and YouTube TV.

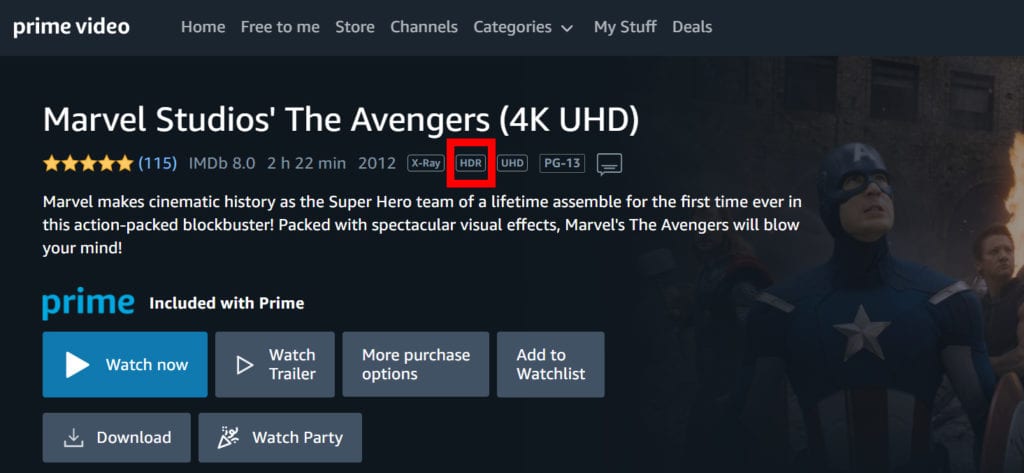

Some streaming services support several different HDR formats, while others, like Netflix, only offer HDR content with a premium subscription. It can also be hard to find which movies and shows are HDR compatible, but streaming services will sometimes show a badge that says HDR or Dolby Vision in the video’s description.

If you can’t decide which streaming service is right for you, check out our list of the best streaming services here.

How to Turn On HDR

If your TV supports HDR, it will automatically turn on HDR when you start playing compatible content. You will usually know HDR is on if you see an HDR badge appear on your screen, typically in the top-right corner. If you’re having any trouble enabling HDR on your TV, check whether your TV, your playback device, and your content are all compatible.

You might also want to check your TV’s settings to see if an external device’s HDR support is switched on. Just open your television’s menu and find the settings for external devices. The label for these settings may vary depending on the manufacturer.

If you’re still in the process of finding a new TV to buy, check out our list of the best smart TVs for any budget.
